I’ve been running a server at home for as long as I can remember. Over the years, the tasks performed by the server have varied greatly, from a complete mail/web/file server, to file/streaming/backup, and in its most recent incarnation, streaming/backup.

Somewhere along the way (2008 or so), a Synology NAS snuck in, “hijacked” all our data, and now streams it to AirPlay and Chromecast devices.

For the past 8 years, my server has been a Mac Mini, replaced every 3-5 years with the latest model. The Mini is quiet, has plenty of horsepower for backing up 2TB and streaming movies, and, most importantly, it uses 15 watts when idle (30-40 watts under load).

With the NAS handling storage and streaming, suddenly the server was only responsible for:

- CrashPlan for inbound and outbound backups from the NAS.

- Running a website

- Running UniFi controller

- Running my Mosquitto (MQTT) server for temperature monitoring (more on that later)

- Performing various scheduled tasks

That made it a rather expensive solution, in both hardware and power, for such a light workload.

Fortunately, Edward Snowden came along, and I decided to stop using cloud services and instead build my own backup cloud.

I briefly tested a couple of Raspberry Pi B+ boxes with USB hard drives, but found the network speeds somewhat lacking. I assume the 100 Mbit Ethernet, coupled with the shared USB controller, severely limits the potential throughput of the RPi.

I ended up purchasing a couple of DS115j boxes and equipped each with a 2TB 2.5" hard drive. Running at full load they require 7W, or roughly the same as an RPi B+ with a USB drive attached, and they transfer data at around 70-90 MB/s.

I then installed one box at home and one at a friend’s house. We both have 100 Mbit fiber connections.
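To put those numbers in perspective, here is a rough back-of-the-envelope sketch of how long a full copy takes at different speeds. It assumes decimal units (1 TB = 10^12 bytes), a full 2TB drive, a link that sustains its nominal rate in the upload direction, and no protocol overhead, so real transfers will be slower. The function names are my own for illustration.

```python
def mbit_to_bytes_per_s(mbit: float) -> float:
    """Convert a nominal link speed in Mbit/s to bytes per second."""
    return mbit * 1_000_000 / 8


def transfer_hours(size_bytes: float, rate_bytes_per_s: float) -> float:
    """Hours needed to move size_bytes at a sustained rate."""
    return size_bytes / rate_bytes_per_s / 3600


TWO_TB = 2 * 10**12               # assumption: decimal terabytes, drive completely full

wan_rate = mbit_to_bytes_per_s(100)   # 100 Mbit link, best case
lan_rate = 70 * 1_000_000             # low end of the 70-90 MB/s the NAS manages

print(f"100 Mbit link ceiling: {wan_rate / 1e6:.1f} MB/s")
print(f"2 TB over 100 Mbit:    {transfer_hours(TWO_TB, wan_rate):.1f} hours")
print(f"2 TB at 70 MB/s:       {transfer_hours(TWO_TB, lan_rate):.1f} hours")
```

A 100 Mbit link tops out at 12.5 MB/s, so a full 2TB copy takes nearly two days at best, compared with roughly eight hours at the NAS’s local speed. That is one reason to seed the remote box locally before moving it off-site, and it also shows why 100 Mbit Ethernet alone would cap an RPi B+ well below what the DS115j can deliver.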

Backups can now be handled by the NAS itself with Duplicity via SFTP. Client machines back up using the excellent Arq software for Mac.

I still had the server running, but had removed the worst load: backups. While the light load would have extended the Mini’s lifespan considerably, it was still consuming way more power than I liked.

Enter the Raspberry Pi 2.

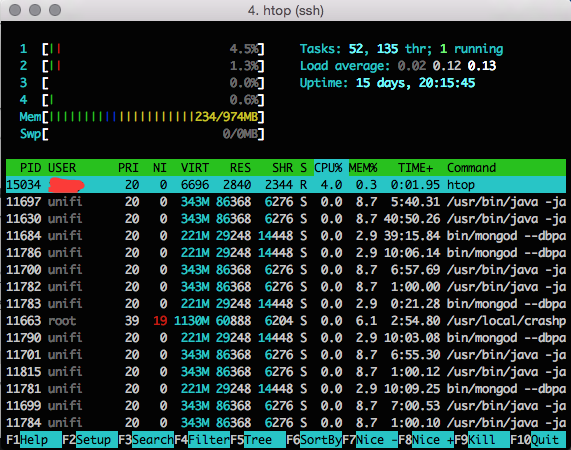

I ordered one as soon as they were announced, and quickly set up my new server. Both CrashPlan and UniFi are Java applications, and I was rather worried that I might be a bit short on memory for running two JVMs, but everything runs smoothly.

Everything is stored on an SSD connected to the USB port. (SSD for lack of moving parts more than speed!)

Why not store data on the NAS? Because the server is accessible from the internet, it lives on a DMZ VLAN separate from my regular LAN, and I don’t like the idea of mounting a network share on my NAS from the server.

With everything set up (CrashPlan, UniFi, Samba, Mosquitto, nginx, Duplicity), this is how it normally looks:

My server now consumes 5-6 watts (including the SSD), and has plenty of power to handle any future requests I may have. The best part is that in my desk drawer I have another RPi 2, complete with a cloned SD card, so in case of hardware failure I just swap it in and I’m back up and running again.
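For a sense of scale, here is a small sketch of what the drop in power draw means over a year of continuous running. It uses the Mac Mini’s idle figure of 15 watts and assumes 5.5 watts for the RPi 2 (the midpoint of the 5-6 watt range), so the real saving is a bit higher whenever the Mini would have been under load.

```python
HOURS_PER_YEAR = 24 * 365


def annual_kwh(watts: float) -> float:
    """Energy in kWh used over a year by a device drawing `watts` continuously."""
    return watts * HOURS_PER_YEAR / 1000


mac_mini = annual_kwh(15)    # Mac Mini at idle
rpi2 = annual_kwh(5.5)       # assumption: midpoint of the measured 5-6 W

print(f"Mac Mini (idle): {mac_mini:.1f} kWh/year")
print(f"RPi 2 + SSD:     {rpi2:.1f} kWh/year")
print(f"Saved:           {mac_mini - rpi2:.1f} kWh/year")
```

That works out to about 131 kWh per year for the idle Mini against about 48 kWh for the RPi 2, a saving of roughly 83 kWh per year. Multiply by your local price per kWh to get the cost.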